Making Heroku Fast with the CloudFront CDN

A faster website results in a better experience and more engagement for your users.

In this experiment we see how easy it is to deploy Discourse, an open-source discussion platform written in Rails 5, to Heroku, and then to add the AWS CloudFront Content Delivery Network (CDN) via the Heroku “Edge” Add-on.

The result is a website that benchmarks 2 times faster with no changes to the app code.

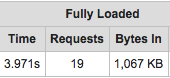

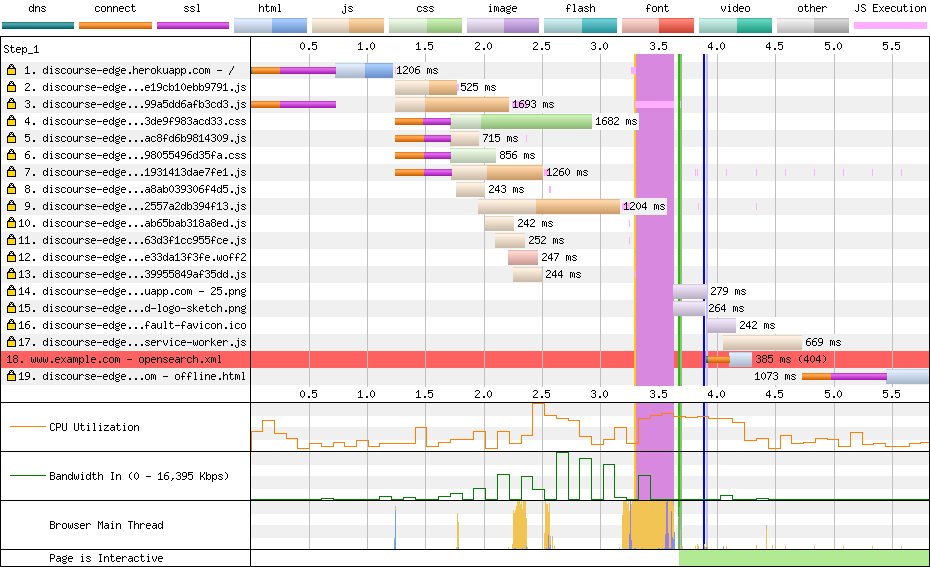

Before: 4 seconds

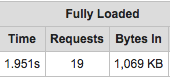

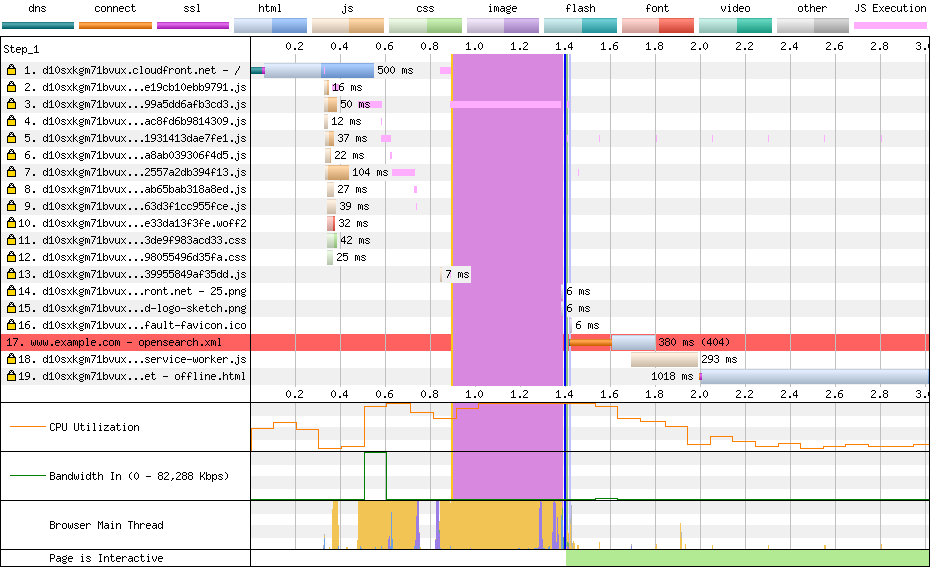

After: 2 seconds

The CDN gives us this performance by instantly returning cached content over a single HTTP/2 connection, instead of making many requests to the Heroku dyno.

Discourse

For this tutorial we’ll use the Discourse discussion platform for our Heroku app. Discourse is built with Rails 5 and its source code is available on GitHub.

You can deploy Discourse to Heroku by following the recipe in this Gist. While it doesn’t deploy directly out of the box, the patches and config needed to run on Heroku are minimal. The most important thing is to configure Rails to serve static assets directly:

$ heroku config:set DISCOURSE_SERVE_STATIC_ASSETS=true

After a git push heroku master, we have a Discourse app.

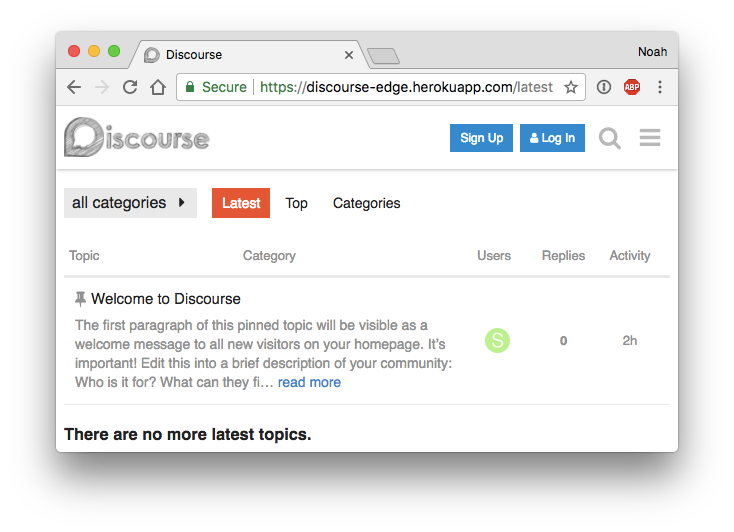

Discourse on Heroku!

Caching with a Content Delivery Network

Forum software like Discourse is the perfect use case for caching with a CDN. On a discussion forum we expect many more people to read a discussion than to post a new message to it.

So in a perfect world almost every web page view can be a “hit” and served instantly from content cached in a CDN. Only after someone submits a new post will we need to fall back to the app server and database to dynamically generate a new web page.
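To make the read-heavy argument concrete, here is a toy Python model of a cache sitting in front of a forum. It is a sketch under loud assumptions, not how CloudFront works internally: each event is a page view or a new post on a hypothetical topic, and we assume a new post drops that topic's cached page immediately (in practice that takes an invalidation or a short TTL). The function name hit_ratio is illustrative.

```python
from typing import Iterable, Set, Tuple


def hit_ratio(events: Iterable[Tuple[str, str]]) -> float:
    """Fraction of page views served from a (toy) cache.

    events: ("view", topic) or ("post", topic) tuples, in time order.
    Assumption: a post immediately evicts that topic's cached page.
    """
    cached: Set[str] = set()
    hits = views = 0
    for kind, topic in events:
        if kind == "post":
            cached.discard(topic)  # page is now stale; next view goes to the app server
        elif kind == "view":
            views += 1
            if topic in cached:
                hits += 1  # served from the cache
            else:
                cached.add(topic)  # miss: origin renders the page, cache keeps a copy
        else:
            raise ValueError(f"unknown event kind: {kind!r}")
    return hits / views if views else 0.0


# 100 reads of one topic with a single new post halfway through.
events = [("view", "t1")] * 50 + [("post", "t1")] + [("view", "t1")] * 50
print(hit_ratio(events))  # 0.98
```

Only the very first view and the first view after the post miss, so 98 of the 100 views are hits.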

So let's add the AWS CloudFront CDN, via the Edge Add-on, and see how it performs.

$ heroku addons:add edge:test

Creating edge:test on ⬢ discourse-edge... free

Successfully provisioned 30cdc82b-899a-47cd-8116-ce1ac06dedec

Created edge-parallel-57846 as EDGE_AWS_ACCESS_KEY_ID, EDGE_AWS_SECRET_ACCESS_KEY, EDGE_DISTRIBUTION_ID, EDGE_URL

Note that it takes about 10 minutes to configure the global CDN infrastructure. Run heroku addons:open edge to open the Edge Dashboard and check whether the CDN status is still “In Progress”. When the status is “Deployed”, try accessing the app via the EDGE_URL.

$ heroku config:get EDGE_URL

https://d10sxkgm71bvux.cloudfront.net

$ curl https://d10sxkgm71bvux.cloudfront.net

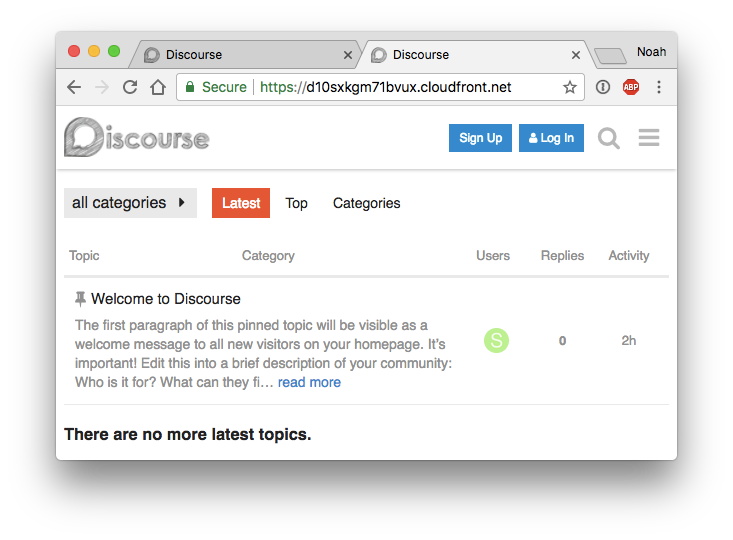

... Discourse via CloudFront!
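Beyond eyeballing the page, CloudFront reports whether it answered from its cache in the X-Cache response header (for example “Hit from cloudfront” or “Miss from cloudfront”). The sketch below fetches the EDGE_URL twice with Python's standard library and classifies that header. The helper names are hypothetical, and whether the second request is a hit depends on the page being cacheable under the distribution's settings.

```python
import os
import urllib.request
from typing import Optional


def cloudfront_cache_status(x_cache: Optional[str]) -> str:
    """Classify CloudFront's X-Cache header, e.g. "Hit from cloudfront"."""
    words = (x_cache or "").split()
    if not words:
        return "unknown"  # header missing: probably not served through CloudFront
    # "RefreshHit" means CloudFront revalidated its cached copy with the origin.
    return {"hit": "hit", "refreshhit": "hit", "miss": "miss"}.get(words[0].lower(), "other")


def fetch_cache_status(url: str) -> str:
    """GET the URL and report how CloudFront served it."""
    with urllib.request.urlopen(url, timeout=10) as resp:
        return cloudfront_cache_status(resp.headers.get("X-Cache"))


if __name__ == "__main__":
    # EDGE_URL is set by the Edge add-on on Heroku; export it locally first, e.g.
    #   export EDGE_URL=$(heroku config:get EDGE_URL)
    edge_url = os.environ.get("EDGE_URL")
    if not edge_url:
        print("EDGE_URL is not set")
    else:
        # The first request may be a miss that fills the cache; the second may hit.
        for attempt in (1, 2):
            print(f"request {attempt}: {fetch_cache_status(edge_url)}")
```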

Performance

Now we can compare performance between herokuapp.com and cloudfront.net. For this, I used the excellent WebPageTest service. This benchmarks a website with an actual web browser from many locations around the world and reports just about everything about the network requests and rendering speed.

Here are the raw results for Heroku vs CloudFront for a test from Singapore.

On Heroku we are seeing 3.883 seconds to render the webpage. Through CloudFront it’s 1.394 seconds. That’s more than twice as fast. How can it be?
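For reference, the ratio of the two Singapore render times works out to:

```latex
\text{speedup} = \frac{3.883\,\text{s}}{1.394\,\text{s}} \approx 2.79
\qquad
\text{reduction} = \frac{3.883 - 1.394}{3.883} \approx 0.64 = 64\%
```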

First, look at the “waterfall” view:

Heroku responses of 100 to 1000ms in serial

CloudFront responses of 10 to 100ms in parallel

On Heroku (top) we see requests taking anywhere from 100 to 1000 milliseconds, served in a largely serial fashion. The Rails web server spends significant time serving each static file from the Dyno.

On CloudFront (bottom) we see the requests taking <100 milliseconds served in a largely parallel fashion. The CDN has cached the content and is returning it extremely quickly.
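The difference between the two waterfalls can be approximated with a small scheduling model: the total time to finish a page when at most N requests are in flight, each starting as soon as a slot frees up. This is a simplified sketch that ignores connection setup and bandwidth, and the per-request durations below are hypothetical numbers picked from the ranges in the captions, not WebPageTest data.

```python
import heapq
from typing import List


def page_load_ms(request_ms: List[int], max_in_flight: int) -> int:
    """Time to finish all requests when at most `max_in_flight` run at once.

    Requests start in order, each as soon as a slot frees up.
    max_in_flight=1 is fully serial; a large value approximates
    many requests multiplexed at once.
    """
    if max_in_flight < 1:
        raise ValueError("max_in_flight must be >= 1")
    # Heap of times at which each slot becomes free; all are free at t=0.
    slots = [0] * min(max_in_flight, max(len(request_ms), 1))
    for duration in request_ms:
        start = heapq.heappop(slots)  # earliest free slot
        heapq.heappush(slots, start + duration)
    return max(slots)


heroku = [400, 300, 900, 200, 600, 150]  # hypothetical durations, ms
cloudfront = [40, 30, 90, 20, 60, 15]  # hypothetical durations, ms
print(page_load_ms(heroku, 1))  # 2550: fully serial
print(page_load_ms(cloudfront, 100))  # 90: everything in flight at once
```

Slow requests served one after another add up, while short requests in flight together cost roughly as much as the slowest one.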

Next, look at the “connection” view:

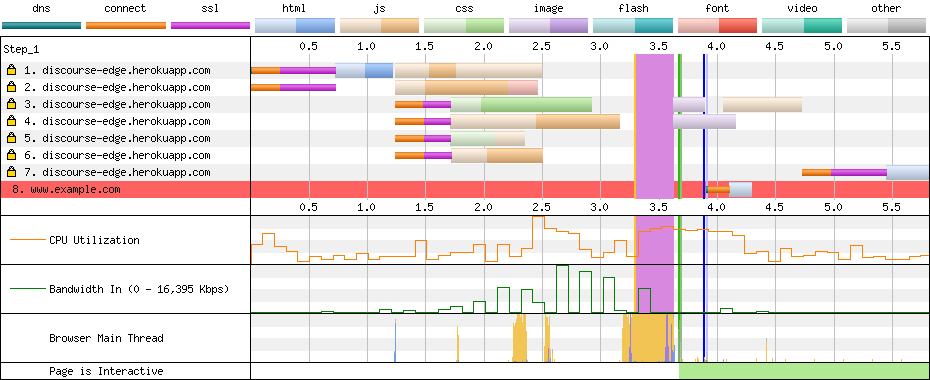

Heroku uses 7 connections to the dyno

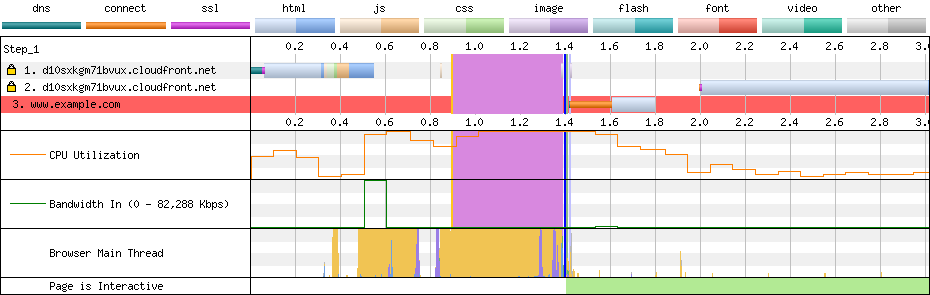

CloudFront uses 2 connections to the CDN

On Heroku (left) we see 7 connections to the Dyno. Each connection adds more time spent in the network and SSL layer and competes for the max concurrent connections our Dyno can handle.

On CloudFront (right) we see only 2 connections to the CDN. This is thanks to the HTTP/2 protocol: a browser can open a single connection to CloudFront, pay the network and HTTPS negotiation penalty only once, and then “multiplex” many requests and responses over that connection.
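A rough way to see the cost of extra connections is to count the round trips spent on handshakes: one for TCP, plus two for a full TLS 1.2 handshake (one for TLS 1.3). This sketch sums that cost across connections. It is an aggregate rather than wall-clock time, since browsers open some connections concurrently, it ignores session resumption, and the 200 ms round-trip time is an assumed figure (a nearby CDN edge would usually have a lower RTT as well).

```python
def setup_round_trips(connections: int, tls_round_trips: int = 2) -> int:
    """Round trips spent opening HTTPS connections.

    Each connection pays 1 round trip for the TCP handshake plus
    `tls_round_trips` for TLS (2 for a full TLS 1.2 handshake, 1 for TLS 1.3).
    Session resumption, TCP Fast Open and 0-RTT are ignored.
    """
    if connections < 0 or tls_round_trips < 0:
        raise ValueError("counts must be non-negative")
    return connections * (1 + tls_round_trips)


def setup_ms(connections: int, rtt_ms: float, tls_round_trips: int = 2) -> float:
    """Aggregate setup time summed over connections (not wall-clock time)."""
    return setup_round_trips(connections, tls_round_trips) * rtt_ms


RTT_MS = 200  # assumed round-trip time, for illustration only
print(setup_ms(7, RTT_MS))  # 7 connections, as in the Heroku test: 4200
print(setup_ms(2, RTT_MS))  # 2 connections, as in the CloudFront test: 1200
```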

Conclusions

Here we see how easy it is to speed up a real Rails 5 app by accessing it via the CloudFront CDN. Serving assets from a cache instead of our Dyno, and multiplexing responses over HTTP/2, gives our users a huge performance boost.

It’s amazing that after we run heroku addons:add edge our website is 2 times faster with no changes to the app code.

Of course your mileage may vary. In this experiment we used an app that is extremely favorable to caching. We also used a single hobby Dyno for our origin app; we could of course get more performance from more and faster dynos.

Finally, a CDN does have some tradeoffs. For a real-world app, we would likely need to consider new things like:

- The HTTP Cache-Control headers our app returns
- The “time-to-live” (TTL) settings of the CDN
- Object invalidation in the CDN on deploys
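For the first two points, our understanding of CloudFront's documented expiration rules is roughly this: when the origin sends s-maxage or max-age, that value is clamped between the distribution's minimum and maximum TTL (s-maxage taking precedence), and when neither is present the default TTL applies. The function below is a simplified sketch of those rules, not CloudFront's implementation. It does not model Expires, no-cache, no-store or private, and the TTL numbers in the example are illustrative, not the Edge add-on's settings.

```python
import re
from typing import Optional


def _directive_seconds(cache_control: str, name: str) -> Optional[int]:
    """Integer value of a Cache-Control directive such as max-age=60, if present."""
    pattern = rf"(?:^|,)\s*{re.escape(name)}\s*=\s*(\d+)"
    match = re.search(pattern, cache_control, re.IGNORECASE)
    return int(match.group(1)) if match else None


def cloudfront_ttl(cache_control: Optional[str], min_ttl: int, default_ttl: int, max_ttl: int) -> int:
    """Simplified sketch of how long a CDN like CloudFront keeps an object, in seconds.

    - s-maxage (for shared caches) wins over max-age when both are present.
    - The origin's value is clamped into [min_ttl, max_ttl].
    - With neither directive, the distribution's default_ttl applies.
    """
    header = cache_control or ""
    origin_ttl = _directive_seconds(header, "s-maxage")
    if origin_ttl is None:
        origin_ttl = _directive_seconds(header, "max-age")
    if origin_ttl is None:
        return default_ttl
    return max(min_ttl, min(origin_ttl, max_ttl))


print(cloudfront_ttl("public, max-age=60", 0, 86400, 31536000))  # 60
print(cloudfront_ttl("max-age=60, s-maxage=300", 0, 86400, 31536000))  # 300
print(cloudfront_ttl(None, 0, 86400, 31536000))  # 86400
```

Because a cached page can outlive a deploy for up to its TTL, short TTLs or an explicit invalidation step are the usual levers for the third point.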

Still, this is a very promising start…